It's That Time Again

You can feel it in the air around mid-November. Or late March. Or whenever your company's fiscal calendar dictates that it's time to perform the ancient corporate sacrament known as "Performance Review." The emails start arriving. HR sends calendar holds. Your manager's calendar suddenly fills with mysterious blocks labeled "calibration." A shared document appears in your inbox - a self-assessment template with questions like "Describe your key accomplishments this period" and "Rate yourself on a scale of 1-5 across the following competencies."

And somewhere deep in your gut, you feel it: the familiar mix of dread, resignation, and faint absurdity. Not because you're afraid of honest feedback. But because you know - from years of lived experience - that what's about to happen has almost nothing to do with your actual performance, your real development, or any meaningful conversation about your work.

It's performance review season. Managers will spend weeks writing reviews. Employees will craft carefully worded self-assessments. HR will send three rounds of reminders with escalating urgency. Calibration sessions will consume entire days - sometimes weeks - of leadership bandwidth. At the end of it all, ratings will be assigned, a number will be attached to a person, conversations will happen behind closed doors and then, awkwardly, behind open ones.

Two weeks later, everyone will go back to doing exactly what they were doing before. The review changed nothing. But it consumed hundreds - sometimes thousands - of collective hours. The machine ran perfectly. It just didn't produce anything.

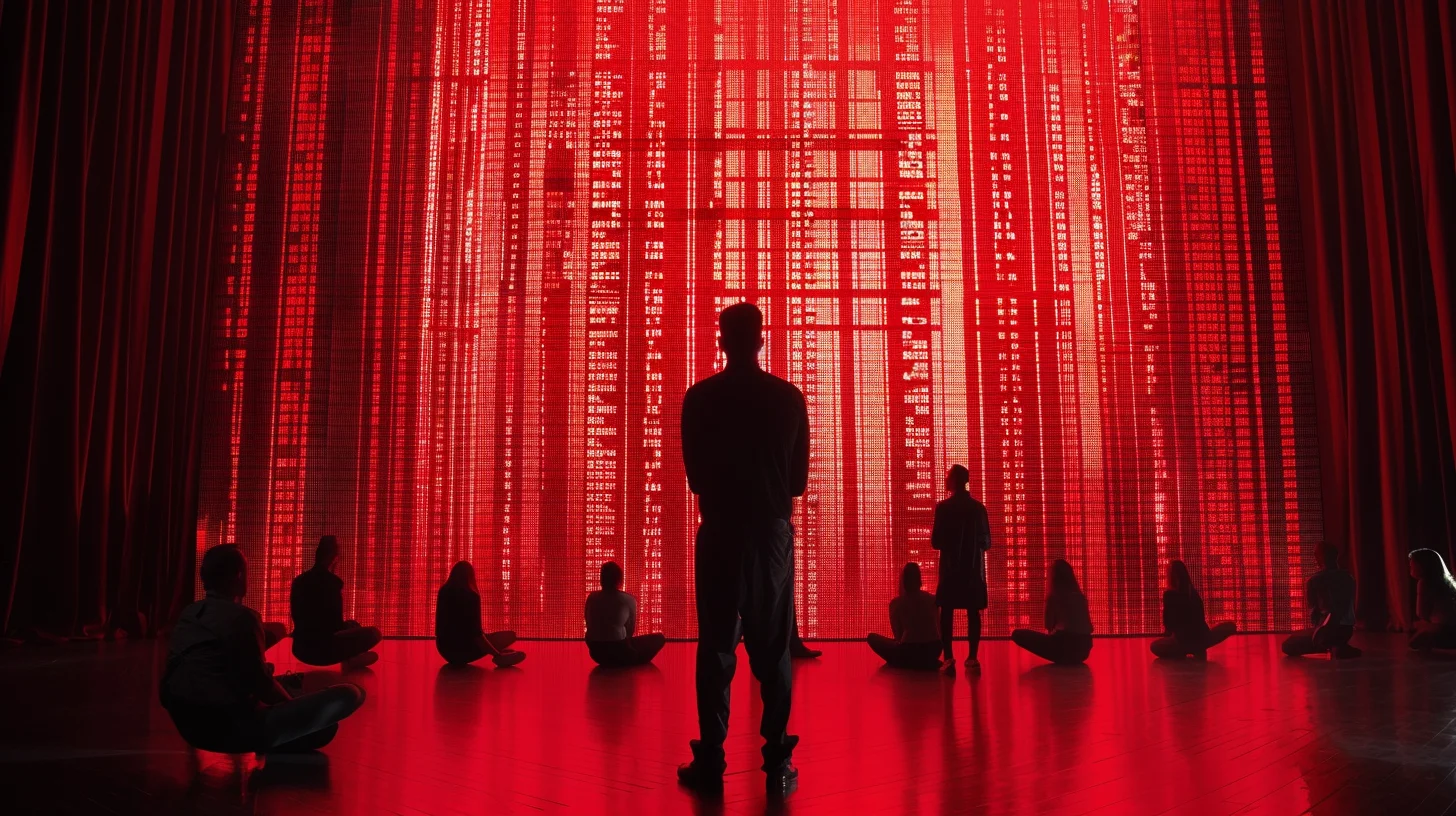

Performance review in most organizations isn't a tool for improving performance. It's a ritual - a ceremonial act that the organization performs to maintain its belief in its own rationality. The spreadsheets, the scales, the models, the forced distributions - these are symbols of a fair system, not fairness itself.

This is System Signal #27. And it's one of the most expensive organizational dysfunctions in existence - not because the process is poorly designed (though it usually is), but because it creates the illusion of people development while actively preventing it.

The Mechanics of the Ritual

To understand why performance reviews fail, you need to look at the specific mechanisms that corrupt the process. These aren't edge cases or signs of a "bad implementation." They're structural features of annual review systems - patterns so consistent across industries, geographies, and company sizes that they should be considered laws of organizational behavior.

The review nominally covers twelve months. In practice, it evaluates the last six weeks. Everything before that is a blur - both for the manager writing the review and the employee filling out the self-assessment. That critical project you delivered in February? The crisis you resolved in April? The mentoring you did all summer? It's all been overwritten by whatever happened in October.

This isn't laziness. It's how human memory works. We are terrible at accurate recall over long time periods, especially for nuanced, multi-dimensional information like "how well did this person perform across a dozen competencies over the past year?" No one keeps a running log. No one goes back through Jira tickets from Q1. The review becomes a snapshot disguised as a panorama - a Polaroid pretending to be a documentary.

After managers write their reviews, something magical happens: the calibration session. In theory, this is where leaders align on consistent standards across teams. In practice, it's a political negotiation where ratings are adjusted based on budget constraints, headcount plans, and "distribution requirements" rather than actual performance.

Here's how it actually works. HR or the VP announces that no more than 15% of the organization can receive the highest rating. Maybe 10%. There's a budget attached to that top tier - a pool of bonus dollars or equity grants. Now the managers sit in a room and horse-trade. "I'll drop Sarah to 'meets expectations' if you drop Michael." "We can't give both of them 'exceeds' - take it up with whoever controls the compensation pool." The rating doesn't describe what the person did. It describes what the budget allows the company to acknowledge.

The employee never sees this. They just get a rating and an explanation that sounds like feedback but is actually post-hoc rationalization of a budgetary decision. The manager who fought for them in the room now has to deliver a rating they may not even agree with, wearing a face that suggests they do.

Most managers give most people "meets expectations." This isn't because most people are mediocre. It's because the rating system creates perverse incentives at both ends of the scale.

Rating someone low requires confrontation. It means difficult conversations, potential HR involvement, documentation requirements, and the risk that the employee will disengage or leave - creating a vacancy the manager then has to fill. It's emotionally exhausting and politically risky. Most managers avoid it unless the situation is truly untenable.

Rating someone high requires justification. It means defending the rating in calibration, competing with other managers for limited "exceeds" slots, and setting expectations that you'll deliver similar ratings in future cycles. It also creates comparison problems: if you give one person "exceeds," everyone else on the team wonders why they didn't get it too.

The path of least resistance? "Meets expectations" for everyone. The rating that requires no defense, triggers no confrontation, and maintains the comfortable fiction that the team is a uniform block of adequate performers. It's the organizational equivalent of a shrug.

Employees write aspirational self-reviews. Managers write diplomatic ones. Neither reflects reality - and both parties know it.

The self-assessment is a peculiar document. You're asked to objectively evaluate yourself while knowing that this document will influence your compensation. The rational response is to present the most favorable version of your work without tipping into obvious self-promotion. So you carefully frame every project as a success, every challenge as an opportunity you seized, every mistake as a "learning experience." You use words like "drove," "led," "spearheaded," and "transformed." Your self-assessment reads like a LinkedIn post about yourself.

Meanwhile, your manager is writing their version. They know your actual performance - the good, the mediocre, the problematic. But they also know they'll have to sit across from you and read this aloud. So they soften the edges. "Could improve stakeholder communication" becomes "Opportunity to further develop cross-functional alignment skills." "Missed three deadlines" becomes "Would benefit from enhanced project management rigor." The review is written in a dialect of English that exists nowhere else in human communication - a language designed to say things without actually saying them.

Perhaps the most destructive mechanic: the performance review trains organizations to store feedback instead of delivering it. Something goes wrong in March. The manager notices. Instead of addressing it in real-time, they file it away mentally - "I'll bring this up in the review." By November, the context is lost. The employee doesn't remember the specifics. The manager's memory has been distorted by eight months of subsequent events. Feedback that would have been actionable in March arrives in November as a vague, context-free criticism that the employee has no idea what to do with.

The same happens with positive feedback. A brilliant piece of work in May gets acknowledged six months later, stripped of all the emotional resonance and motivational power it would have carried if delivered when it mattered. The review becomes a time capsule of reactions that expired months ago.

The Symptoms

These mechanics produce a consistent set of downstream effects. If your organization exhibits more than two of the following, your performance review isn't a development tool - it's an expensive form of organizational theater.

Top performers and average performers get the same rating. "Exceeds expectations" is rare - not because few people exceed expectations, but because the rating costs the compensation budget. So the engineer who shipped three major features, mentored two juniors, and fixed a critical production incident gets the same "meets expectations" as the one who showed up, did the minimum, and left on time. Both of them know this. One of them starts updating their resume.

The most common post-review sentiment in any organization: "That was a waste of time." Not angry. Not hurt. Just... empty. The ritual consumed days of collective effort and produced nothing actionable. Everyone goes back to their desks and continues exactly as before.

Development plans are filed and forgotten. Every review cycle produces a stack of "Individual Development Plans" - documents outlining goals, training needs, and growth areas. In theory, these guide the employee's development over the next cycle. In practice, neither party looks at them again until the next review season, when they're hastily referenced to check whether any boxes can be plausibly ticked before creating a new set of equally aspirational and equally ignored goals.

High performers leave. This is the cruelest consequence. The people who care most about growth, recognition, and impact are the ones most damaged by a system that fails to differentiate. When a top performer gets the same rating, the same generic feedback, and the same 3% raise as everyone else, the message is clear: this organization doesn't see you. It doesn't matter how hard you work or how much you contribute - the system has already decided that everyone is the same. High performers don't argue with this. They leave. Quietly. Usually to a company that promises to be "different" - and often is, at least for a while.

Low performers stay. The flip side is equally damaging. Because the system avoids confrontation, underperformers are never clearly told they're underperforming. They get "meets expectations" - the same rating as everyone else. They have no reason to change because the system has told them, implicitly, that everything is fine. The manager knows it's not fine. The team knows it's not fine. HR probably knows it's not fine. But the review says "meets expectations," and that's what the record shows. The problem persists not because no one sees it, but because the review system provides a formal mechanism for pretending it doesn't exist.

The review itself becomes a source of anxiety rather than growth. Employees don't approach the review thinking "I'm excited to learn how I can improve." They approach it thinking "I hope I don't get screwed." The entire emotional register is defensive. People rehearse counter-arguments. They save emails that prove their contributions. They document their wins like a lawyer building a case. This is not development. This is litigation preparation. The review hasn't created a growth conversation - it's created an adversarial proceeding where both parties are trying to survive the encounter with minimal damage.

When a feedback system makes people feel they need to lawyer up, the system has stopped serving development and started serving self-preservation. You're not reviewing performance anymore - you're conducting performance trials.

Why Companies Keep Doing It

If performance reviews are this broken, why does every large organization still run them? Because they serve real functions - just not the ones they claim to serve.

Compliance. Public companies, regulated industries, and organizations above a certain size face legal and regulatory requirements around performance documentation. They need records that show employees were evaluated, that standards were applied, that decisions about compensation and termination were based on documented criteria. The performance review satisfies this requirement. It creates a paper trail. Whether that paper trail reflects reality is secondary to its existence.

Legal protection. When an employee is terminated and files a wrongful termination suit, the first thing the company's lawyers look for is the performance review history. "We documented performance issues over three review cycles." This is why HR insists on the process even when everyone knows it's theater - because in a courtroom, theater with documentation beats reality without it. The review isn't for the employee. It's for the company's legal defense file.

The fairness illusion. Organizations crave the perception of fairness, even - especially - when actual fairness is impossible. The performance review provides this. "Everyone goes through the same process." Same form. Same rating scale. Same calibration. Same timeline. The uniformity of the process substitutes for the uniformity of the outcome. It doesn't matter that the process is riddled with bias, that calibrations are political, that ratings are constrained by budgets. What matters is that the process looks fair. It's a ritual of procedural justice - the organizational equivalent of a rain dance. It may not bring rain, but at least everyone participated equally in the dance.

Tradition and inertia. "We've always done it this way." This is perhaps the most honest reason, and the hardest to overcome. Performance reviews are embedded in the organizational operating system. Compensation cycles depend on them. Promotion processes reference them. Manager training includes them. HRIS systems are built around them. Removing the performance review would require redesigning half a dozen interconnected processes - and no single person or team has the authority, energy, or political capital to do that. So the ritual continues, powered by the organizational momentum of "this is just how things work."

Management avoidance. There's a fifth reason that organizations rarely admit: the annual review gives managers permission to not manage for the other eleven months. Why have an uncomfortable conversation now when you can defer it to the review? Why address a performance issue in real-time when there's a formal process for that in Q4? The review becomes a procrastination mechanism - a scheduled future date that absorbs all the difficult conversations that should be happening continuously. "I'll bring it up in the review" is the manager's equivalent of "I'll start the diet on Monday."

The annual performance review doesn't just fail to develop people - it actively discourages the continuous, real-time management behavior that actually does. It replaces a hundred small, timely, contextual conversations with one large, delayed, generic one. And then we wonder why nothing changes.

The Antidote

Fixing performance reviews isn't about tweaking the form or changing the rating scale from five points to four. It's about recognizing that the traditional review conflates several distinct functions into a single process - and then untangling them.

Separate compensation from feedback. This is the single most impactful change an organization can make. Right now, the performance review tries to be two things at once: a development conversation ("here's how you can grow") and a compensation event ("here's what you're getting paid"). These two purposes are fundamentally incompatible. When money is on the table, no one is genuinely open to feedback. The employee isn't thinking about growth - they're thinking about whether this conversation ends with a 3% raise or a 5% one. The manager isn't thinking about development - they're thinking about how to deliver the bad news about the calibration result.

Split them. Have one conversation about compensation - transparent, direct, with clear criteria and no pretense that it's about "development." And have separate, ongoing conversations about growth - informal, frequent, low-stakes, and genuinely focused on helping the person get better at their work. When you stop pretending that a compensation decision is a coaching session, both conversations get dramatically more honest.

Make feedback continuous, not batched. Feedback has a half-life. The closer it is to the event, the more useful it is. A code review comment the same day is actionable. A note about "communication style" eight months later is not. The goal isn't to eliminate formal check-ins - it's to make them supplements to an ongoing conversation, not substitutes for one.

This doesn't require a new tool or a new process. It requires a cultural norm: if you have feedback, deliver it now. Not in a scheduled meeting. Not in a Google Doc. Not in the review. Now. In the hallway. On Slack. After the standup. The medium doesn't matter. The timing does.

Kill the rating scale. Replace numeric ratings with clear, specific narratives: "What you did" and "What to do differently." A number tells you nothing. "3 out of 5 on communication" - what does that mean? What specifically should you do more of? Less of? A narrative that says "Your technical documentation is exceptional, but you tend to go silent during cross-team disagreements, which leaves your team without advocacy in those moments" - that's actionable. That's something a person can actually work with.

Yes, narratives are harder to write. Yes, they're harder to calibrate. Yes, they don't fit neatly into a compensation formula. That's the point. The ease of numeric ratings is precisely what makes them useless. They compress the irreducible complexity of human performance into a single digit and then pretend that digit means something.

If you must have a formal review, make it about the system. Shift the review conversation from "How did you perform?" to "What enabled your performance, and what blocked it?" This isn't just a semantic change - it fundamentally reframes accountability. Instead of putting the person on trial, you put the environment on trial. Did they have the tools they needed? Were the goals clear? Did organizational decisions help or hinder their work? Was the team structured effectively?

This approach does two things. First, it surfaces systemic issues that are invisible in individual reviews - issues like unclear strategy, poor tooling, excessive meeting load, or organizational friction that slows everyone down. Second, it shifts the emotional register of the conversation from defensive to diagnostic. People are much more willing to engage honestly when the question is "what can we fix about the system?" rather than "what's wrong with you?"

Create accountability for the conversation, not the form. If you measure managers on whether they submitted the review form on time, you'll get forms submitted on time. If you measure managers on whether their team members can articulate their development priorities and feel supported in pursuing them, you'll get actual development. The metric shapes the behavior. Right now, the metric is "completion rate of review forms." Change the metric, and you change the game.

What Real Development Looks Like

The organizations that are genuinely good at developing people don't rely on annual reviews. They do something much simpler and much harder: they build a culture where feedback is a daily practice, not an annual event.

In these organizations, a manager who waits until the annual review to deliver critical feedback would be seen as failing at their job - not fulfilling it. The expectation is that you address things as they arise. Not perfectly. Not with a script. Not with a rating scale. Just honestly, directly, and in real-time.

This is uncomfortable. It requires managers who are willing to have hard conversations regularly, not just once a year when the process gives them permission. It requires employees who are open to hearing feedback when it's fresh and direct, not softened by months of delay and corporate euphemism. It requires leaders who model vulnerability - who publicly acknowledge their own mistakes and growth areas instead of performing omniscience.

It's also the only thing that actually works. People don't develop in annual increments. They develop in daily ones - through small corrections, timely recognition, honest conversations, and the knowledge that someone is paying attention to their work and cares enough to tell them the truth about it. Not once a year. Every day.

The best feedback system in the world is a manager who says "Can we talk about how that meeting went?" the same afternoon it happens. No form required. No rating scale needed. Just a human being paying attention and being honest about what they see.

Companies like Netflix, Bridgewater, and certain high-performing tech organizations have moved toward continuous feedback models - and the results speak for themselves. Not because they found the perfect form or the ideal rating scale, but because they stopped confusing the bureaucracy of evaluation with the practice of development. They separated the two and invested in the one that actually produces results.

The irony is that most organizations already know this. Ask any manager: "When did you have the most impactful development conversation of your career?" Almost no one says "during my annual performance review." They describe a moment - a specific conversation with a specific person at a specific time when someone told them something real and it changed how they worked. Those moments happened organically. They happened in real-time. They happened because someone cared enough to speak up when it mattered.

That's what development looks like. Everything else is paperwork.

The Organizational Cost No One Calculates

Let's do the math that nobody does. Take a company of 1,000 employees. Each employee spends an average of 3-4 hours on their self-assessment. Each manager spends 2-3 hours per direct report writing reviews - with an average of 6 reports, that's 12-18 hours per manager. Calibration sessions consume 8-16 hours of leadership time across multiple rounds. HR spends weeks administering the process - sending reminders, chasing stragglers, compiling data, preparing reports.

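Conservative estimate: 5,000 to 8,000 person-hours per cycle. Self-assessments alone account for 3,000 to 4,000 of those hours and manager-written reviews for roughly 2,000 to 3,000 more, before calibration and HR administration are counted. For a company that runs this semi-annually, that's 10,000 to 16,000 hours per year. At an average fully loaded cost of $75 per hour, you're looking at $750,000 to $1.2 million annually - spent on a process that, by the most generous assessment, changes nothing about how anyone actually works.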
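The arithmetic above can be reproduced in a few lines. This is a minimal sketch, not a costing model: the constants come straight from the figures in the text, the function names are made up for illustration, and it assumes every one of the 1,000 employees receives exactly one manager-written review. Calibration and HR time are left out of the itemized part because the text does not give a total for them.

```python
# Figures quoted in the text; (low, high) pairs are ranges.
EMPLOYEES = 1_000
SELF_ASSESSMENT_HOURS = (3, 4)      # per employee
REVIEW_HOURS_PER_REPORT = (2, 3)    # manager time per direct report
STATED_HOURS_PER_CYCLE = (5_000, 8_000)  # the text's overall estimate
CYCLES_PER_YEAR = 2                 # semi-annual reviews
HOURLY_COST = 75                    # fully loaded, USD


def itemized_hours_per_cycle(employees, self_hours, review_hours):
    """Hours for self-assessments plus manager-written reviews only.

    Assumption: each employee is reviewed once, so manager time scales
    with headcount. Calibration and HR administration are excluded.
    """
    low = employees * (self_hours[0] + review_hours[0])
    high = employees * (self_hours[1] + review_hours[1])
    return low, high


def annual_cost(hours_per_cycle, cycles_per_year, hourly_cost):
    """Turn a (low, high) hours-per-cycle range into yearly dollars."""
    low, high = hours_per_cycle
    return (low * cycles_per_year * hourly_cost,
            high * cycles_per_year * hourly_cost)


itemized = itemized_hours_per_cycle(
    EMPLOYEES, SELF_ASSESSMENT_HOURS, REVIEW_HOURS_PER_REPORT
)
cost = annual_cost(STATED_HOURS_PER_CYCLE, CYCLES_PER_YEAR, HOURLY_COST)

print(f"Itemized hours per cycle: {itemized[0]:,} to {itemized[1]:,}")
print(f"Annual cost: ${cost[0]:,} to ${cost[1]:,}")
```

The itemized part comes to 5,000 to 7,000 hours per cycle, which is why 5,000 to 8,000 is a conservative range once calibration and HR time are added. Swap in your own headcount and hourly cost to see what the ritual costs where you work.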

But the real cost isn't in hours. It's in opportunity cost. Those are hours that managers could have spent actually managing - coaching their teams, removing obstacles, having real conversations. Those are hours that employees could have spent doing their best work instead of crafting narratives about their best work. Those are hours that leaders could have spent on strategy instead of haggling over rating distributions.

And then there's the hidden cost: the talent that leaves because the system doesn't see them. Replacing a high performer costs 1.5-2x their annual salary. If your review system drives even two or three top performers out the door each year because it failed to differentiate them, you can easily match or exceed the cost of the entire review process - and you've lost the people who were actually driving results.
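How quickly departures add up depends on salary, which the text does not state. The sketch below assumes a hypothetical salary of $120,000 purely for illustration; the 1.5-2x multiplier is the one quoted above, and the function names are invented for this example.

```python
import math

ASSUMED_SALARY = 120_000        # hypothetical; not a figure from the text
MULTIPLIER_RANGE = (1.5, 2.0)   # replacement cost as a multiple of salary


def replacement_cost(leavers, salary, multiplier):
    """Total cost of replacing `leavers` people at a given multiplier."""
    return leavers * salary * multiplier


def leavers_to_match(review_cost, salary, multiplier):
    """Smallest whole number of departures whose replacement cost
    reaches `review_cost`."""
    return math.ceil(review_cost / (salary * multiplier))


# Two departures at the low multiplier, three at the high one.
low = replacement_cost(2, ASSUMED_SALARY, MULTIPLIER_RANGE[0])
high = replacement_cost(3, ASSUMED_SALARY, MULTIPLIER_RANGE[1])
print(f"Two to three departures: ${low:,.0f} to ${high:,.0f}")

# Departures needed to reach the low end of the annual review cost.
needed = leavers_to_match(750_000, ASSUMED_SALARY, MULTIPLIER_RANGE[1])
print(f"Departures to reach $750,000 at 2x: {needed}")
```

Under these assumed inputs, two to three departures cost $360,000 to $720,000, which is in the same range as a single review cycle at $75 per hour. Higher salaries push the figure past the annual total quickly, so it is worth plugging in your own numbers.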

The annual review is a post-mortem on a year you can't get back.

The review doesn't measure performance.

It formalizes the organization's need to believe it manages performance.

If you want to develop people, talk to them.

If you want to sort people, use a spreadsheet.

Don't confuse the two.